November 2025 Agent Use: "Let's try it"

My new mantra with AI coding tools

This is a snapshot of how I’m evolving my LLM-assisted engineering workflow — what’s working, what’s not, and the experiments I’m running.

Mantra: Let’s try it

This is my LLM mantra lately. I talk to it for a bit, branch the repo I’m working on, and just let it go. It’s easy to:

- Just get started on something

- Code MVP (Agent) → Review (me) → Learn (me) → then either:

  - Option 1: Wipe the current branch and restart

  - Option 2: Keep iterating b/c it works great
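For illustration, here is a minimal sketch of that branch, try, then wipe-or-keep loop as a small Python helper. The `try/` branch prefix, the `main` base branch, and all function names are hypothetical, and it assumes the agent's work is committed on the throwaway branch before you wipe it.

```python
import re
import subprocess


def branch_name(idea: str) -> str:
    """Turn a free-form idea into a throwaway branch name, e.g. 'try/add-csv-export'."""
    slug = re.sub(r"[^a-z0-9]+", "-", idea.lower()).strip("-")
    return f"try/{slug}"  # 'try/' prefix is an assumption, not a git convention


def start_commands(idea: str) -> list[list[str]]:
    """Create and switch to a fresh experiment branch."""
    return [["git", "switch", "-c", branch_name(idea)]]


def wipe_commands(idea: str, base: str = "main") -> list[list[str]]:
    """Option 1: go back to the base branch and delete the experiment."""
    return [
        ["git", "switch", base],
        ["git", "branch", "-D", branch_name(idea)],  # -D deletes even if unmerged
    ]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; only execute it when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(start_commands("Add CSV export"))  # dry run: prints the git command only
```

Option 2 needs no helper at all: you just stay on the branch and keep prompting.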

Agent Preference: Codex CLI && Claude Opus 4.5

UPDATE 11/26/25 - Still November: Of COURSE like a couple days after I released this… But I’m really liking Opus 4.5. It’s fast and has been knocking out tasks in a way I appreciate/trust way more than Sonnet.

Previously, I was on Claude Code (Sonnet), but Codex has been solid for me, so much so that I’ve upgraded to the Pro plan to get way more usage. A not-insignificant number of times, I find Codex 1-2-shotting a fix/feature that Claude is struggling with.

It comes down to trust and trust is earned. I currently trust Codex to do a better job with the way I work right now. 2 months ago, that was not true.

NOTE: As of now, I haven’t dug into Gemini code tools. They were broken during the first week of launch for me and quick “dip my toe in” tests were underwhelming and sometimes bad.

New Workflows I’m Testing

Co-working Out Loud

This has been the most impactful this past week. It’s very “heavy” from a context standpoint, but the results don’t lie. How this works:

- David and I get on a video call to run a process. (the Event funnel this week, < 1.5 hours total)

- He shares his screen and talks out loud about the process he’s running.

- We hit “slog” parts. For each part, we do one of these:

  - Fire off LLM-coded fixes/features that I can finish in <20 min (during call)

  - Dig into the details with him to understand more (during call)

  - Start 1-2 medium-size projects that I will work on for the next 24 hours (post call)

  - Agree with him that it’s not worth changing (during call)

RESULT: Solo slog → Small team immediate progress

PROOF: David called me just to tell me how much he was enjoying the events funnel now that there’s massive momentum in the tools.

Internal MCPs for n8n to Consume

We don’t have a ton of resources at my company, so we have to find clever ways to get work done. David (Offline owner) has been deep in n8n lately and has slowly pulled me into the wonderful world of workflows. While I do see the benefit, the “slog” that’s happening right now is that the tools need to get data from one place to another.

This week, I built a small MCP + some other tools for one of our internal apps that will hopefully reduce the time it takes to organize Offline Events from a couple of hours to tens of minutes per event.
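To make "a small MCP" concrete, here is a rough stdlib-only sketch of the two tool-related JSON-RPC methods an MCP server answers, `tools/list` and `tools/call`. It is not the actual internal server: the `get_event` tool, the `EVENTS` data, and the `handle` function are all hypothetical, and it skips the initialize handshake and the transport layer that a real server (or the official SDK) would handle.

```python
import json

# Hypothetical stand-in for the internal app's event data.
EVENTS = {"evt_1": {"name": "Sample Event", "rsvps": 42}}


def get_event(event_id: str) -> dict:
    """Hypothetical tool: look up one event by id."""
    return EVENTS[event_id]


# Tool registry: handler plus the metadata a client sees in tools/list.
TOOLS = {
    "get_event": {
        "handler": get_event,
        "description": "Look up one event by id.",
        "inputSchema": {
            "type": "object",
            "properties": {"event_id": {"type": "string"}},
            "required": ["event_id"],
        },
    }
}


def _error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer a single JSON-RPC request for tools/list or tools/call."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": tools}}
    if method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, "Unknown tool")
        try:
            data = tool["handler"](**params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": json.dumps(data)}], "isError": False}
        except Exception as exc:  # tool failures are reported inside the result
            result = {"content": [{"type": "text", "text": repr(exc)}], "isError": True}
        return {"jsonrpc": "2.0", "id": request_id, "result": result}
    return _error(request_id, -32601, "Method not found")
```

An MCP-capable client would first call `tools/list` to discover `get_event`, then send `tools/call` with `{"name": "get_event", "arguments": {"event_id": "evt_1"}}` to fetch the data.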

RESULT: 2-hour API + MCP Work

PROOF: TBD

AI Style Guide Markdown

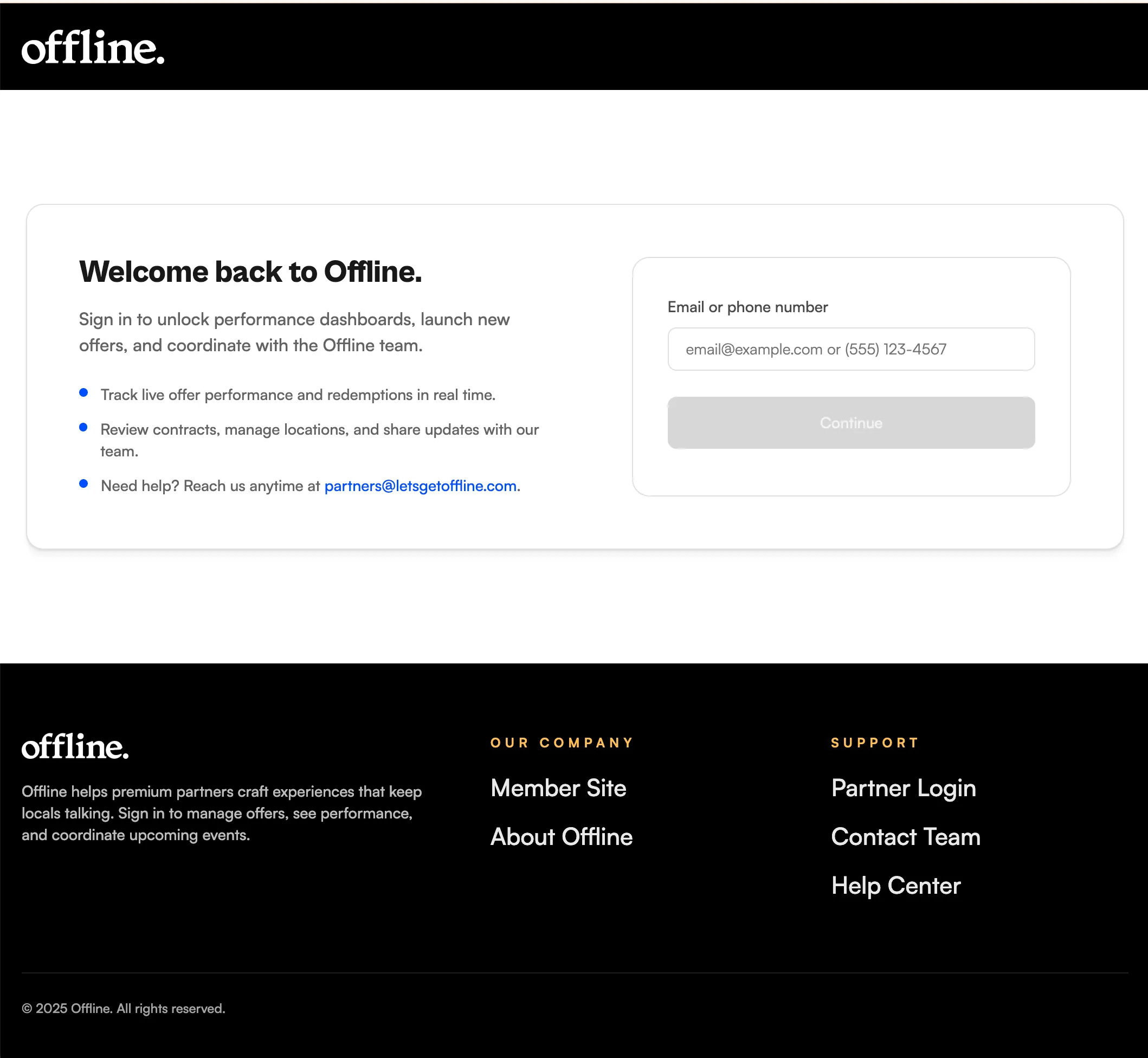

At Offline, we’re testing a shared AI-friendly style guide. We:

- Extracted the styles from our landing page

- Had AI generate a repo with visuals for humans and markdown for LLMs

- Added mini scripts in the various repos we have to check the version and pull down a copy if it changed.
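A mini script like that last step could look roughly like this. It is a sketch under assumptions, not the actual script: the base URL, the `VERSION` and `STYLE_GUIDE.md` file names, and the `sync_style_guide` function are all hypothetical.

```python
from pathlib import Path
from typing import Callable
from urllib.request import urlopen

# Hypothetical location of the shared style guide repo's published files.
BASE_URL = "https://example.com/style-guide"


def http_get(url: str) -> str:
    """Fetch a URL and return its body as text."""
    with urlopen(url) as resp:
        return resp.read().decode("utf-8")


def sync_style_guide(
    dest: Path,
    fetch: Callable[[str], str] = http_get,
    base_url: str = BASE_URL,
) -> bool:
    """Pull a fresh copy of the guide if the remote version changed.

    Returns True when a new copy was written, False when already up to date.
    """
    version_file = dest / "STYLE_GUIDE_VERSION"
    local = version_file.read_text().strip() if version_file.exists() else None
    remote = fetch(f"{base_url}/VERSION").strip()
    if remote == local:
        return False
    dest.mkdir(parents=True, exist_ok=True)
    (dest / "STYLE_GUIDE.md").write_text(fetch(f"{base_url}/STYLE_GUIDE.md"))
    version_file.write_text(remote + "\n")  # record what we pulled
    return True


if __name__ == "__main__":
    changed = sync_style_guide(Path("docs/ai"))
    print("style guide updated" if changed else "style guide already current")
```

Passing `fetch` in as a parameter keeps the version check easy to test without a network call, and the agent only ever reads the local `STYLE_GUIDE.md` copy.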

It’s been OK so far. The main problem we’ve run into is the AI filling in gaps in the guide in ways we didn’t like. But as we fill those gaps in the AI doc with our own preferences, this should get better over time.

Anthropic just released an article, “Improving frontend design through Skills,” that is adjacent to how we’re thinking.

RESULT: Better first-try AI designs.

PROOF: The Post-AI style is a bit more aligned, uses the header/footer properly, and only took 1-2 prompts to get right.

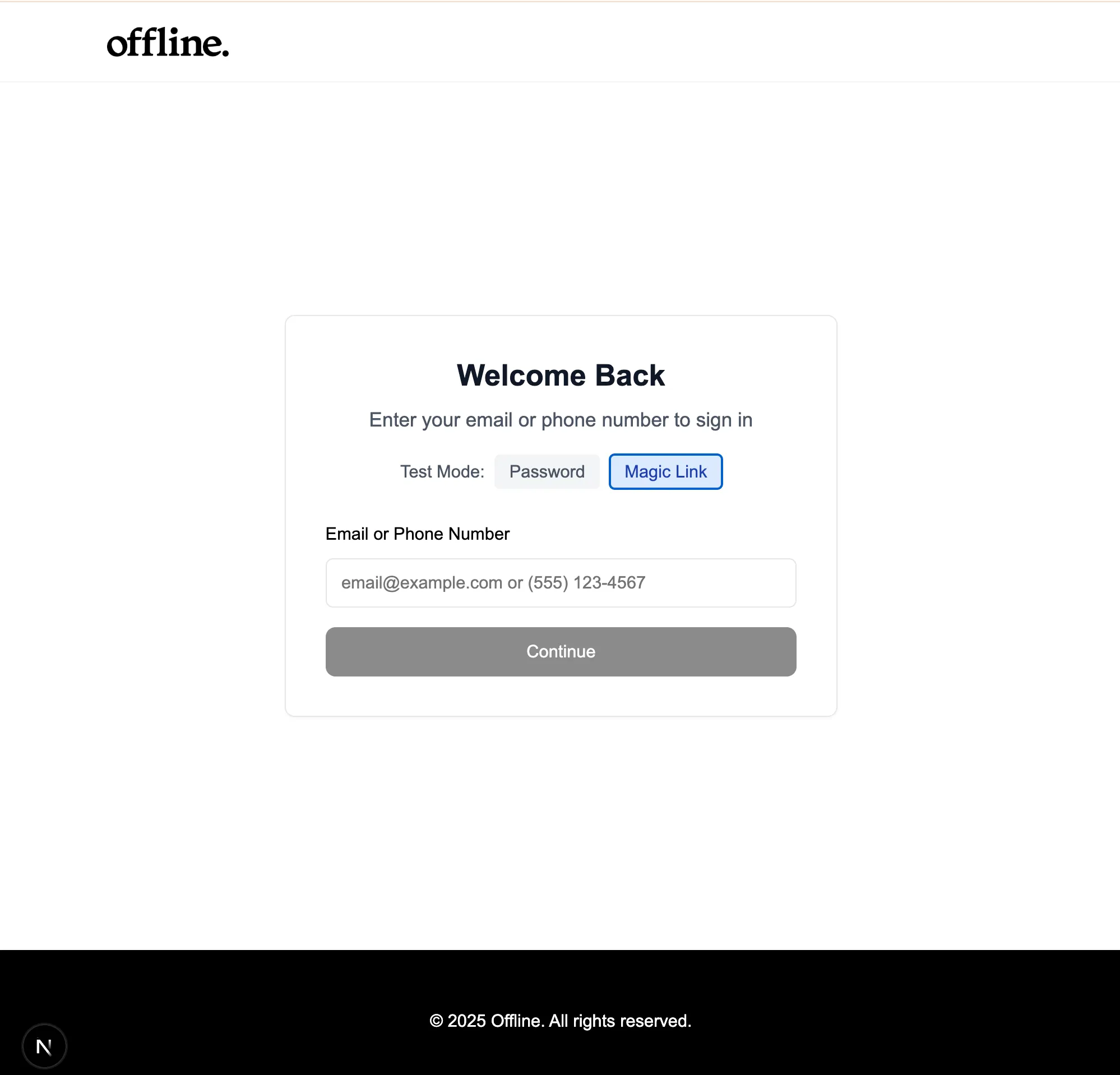

Login Page Pre-AI Style Guide

Login Page Post-AI Style Guide

*slog - that feeling you have when you know something should be faster, but your brain is already running at 200% CPU capacity.